From Pilot to Production: Why the Hard Work Starts After the Demo

Building an AI demo is easy. You take clean data, design a controlled scenario, and show exactly what you want people to see. It looks impressive. And it bears very little resemblance to what production deployment actually involves.

Real data is messy. It changes every day. It has quality issues, gaps, and inconsistencies that no controlled prototype ever has to deal with. Workflows that looked simple on a whiteboard turn out to have dozens of edge cases that nobody documented because they lived in the heads of experienced staff.

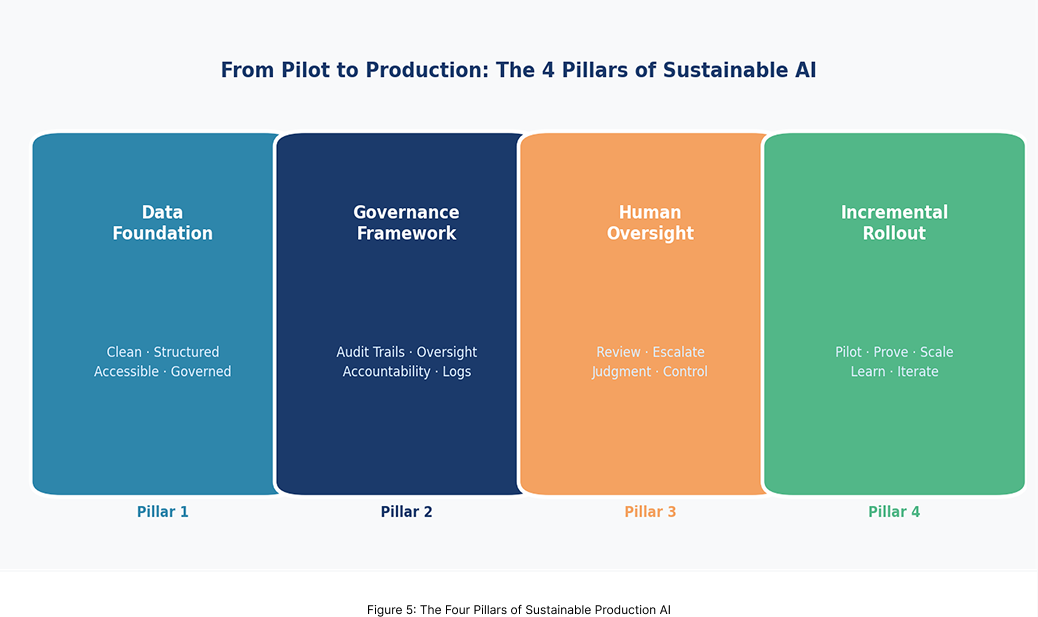

Build on a Solid Data Foundation

Agents are only as intelligent as the information they can access. If your data is siloed, inconsistent, or poorly governed, your agents will reflect that. Making enterprise data available in a form that agents can actually use (clean, structured, accessible, and trustworthy) is foundational work that determines whether your investment delivers or disappoints.

Govern from the Start, Not as an Afterthought

In regulated industries, the question of who is accountable for an agent’s decision is not theoretical. You need clear answers about how decisions are made, how they are logged, who can override them, and what happens when something goes wrong. Organizations that build governance in from the beginning move faster, not slower.
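To make those questions concrete, here is a minimal Python sketch of a decision log that records what an agent decided, who is accountable, and who overrode it. Everything in it is an assumption for illustration: the names `DecisionRecord` and `DecisionLog`, the fields, and the in-memory list. A real deployment would more likely write to an append-only, access-controlled store.

```python
from dataclasses import dataclass, field, asdict
from datetime import datetime, timezone
from typing import Optional
import json


@dataclass
class DecisionRecord:
    """One auditable agent decision. All field names are illustrative."""
    agent_id: str
    action: str
    rationale: str            # how the decision was made
    accountable_owner: str    # person or role answerable for the outcome
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )
    overridden_by: Optional[str] = None
    override_reason: Optional[str] = None


class DecisionLog:
    """In-memory log; a hypothetical stand-in for a durable audit store."""

    def __init__(self, authorized_overriders):
        self._records = []
        self._authorized = set(authorized_overriders)

    def record(self, rec):
        """Store a decision and return its index in the log."""
        self._records.append(rec)
        return len(self._records) - 1

    def override(self, index, user, reason):
        """Mark a decision as overridden, but only by an authorized user."""
        if user not in self._authorized:
            raise PermissionError(f"{user} may not override agent decisions")
        rec = self._records[index]
        rec.overridden_by = user
        rec.override_reason = reason

    def export(self):
        """Serialize the full log as JSON for auditors."""
        return json.dumps([asdict(r) for r in self._records], indent=2)


log = DecisionLog(authorized_overriders={"compliance_lead"})
idx = log.record(DecisionRecord(
    agent_id="claims-agent",
    action="approve_claim",
    rationale="amount within policy limit",
    accountable_owner="claims_manager",
))
log.override(idx, "compliance_lead", "supporting document missing")
print(log.export())
```

Even at this size, the sketch forces each governance question to have an explicit answer: the rationale is stored with the decision, the accountable owner is named up front, and an override attempt by anyone outside the authorized set fails loudly instead of silently succeeding.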

Design for Human Oversight in the Right Places

Production-grade Agentic AI is not about removing people from the loop. It is about removing them from the parts of the loop where they add no value, so they can apply genuine judgment where it matters. The goal is to remove friction, not accountability.
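One way to picture this is a routing rule that sends only the decisions needing judgment to a person. The Python sketch below is an assumption-laden illustration, not a prescribed design: `ProposedAction`, `Route`, the `high_impact` flag, and the 0.9 confidence threshold are all hypothetical, and it assumes the agent can report a usable confidence score.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    AUTO = "auto_execute"
    REVIEW = "human_review"


@dataclass(frozen=True)
class ProposedAction:
    description: str
    confidence: float   # agent's self-reported confidence in [0, 1] (assumed)
    high_impact: bool   # e.g. irreversible or regulated; set by business rules


def route(action, confidence_threshold=0.9):
    """Send high-impact or low-confidence actions to a human reviewer."""
    if action.high_impact or action.confidence < confidence_threshold:
        return Route.REVIEW
    return Route.AUTO


actions = [
    ProposedAction("update mailing address", confidence=0.97, high_impact=False),
    ProposedAction("close customer account", confidence=0.98, high_impact=True),
    ProposedAction("categorize support ticket", confidence=0.62, high_impact=False),
]
for a in actions:
    print(f"{a.description}: {route(a).value}")
```

Here the routine address update runs unattended, while the account closure goes to a reviewer no matter how confident the agent is. Impact, not confidence alone, decides where human judgment is applied, which keeps accountability in place while removing friction from low-stakes work.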

Implement Incrementally, Always

A comprehensive blueprint is essential, but your roadmap should move step by step. Start where the benefit is highest and the complexity is lowest. Prove it. Make the results visible. Let each success build the confidence and institutional knowledge that make the next phase easier.

Production AI is not a launch event. It is a discipline. The organizations building that discipline now are the ones that will look back in three years and wonder how their competitors let themselves fall so far behind.